Continuing from where the last post left off: this time I optimized each function and noted a few things to watch out for.

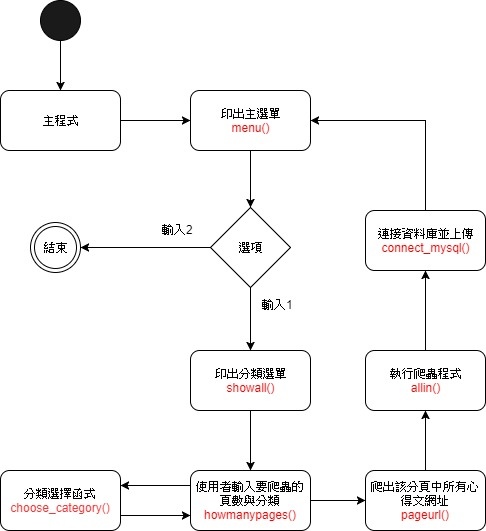

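There are seven functions this time:

1. Main program and main menu

2. Category menu

3. User enters the pages to crawl

4. User enters the category to crawl

5. Collect every review URL on the chosen pages of that category

6. Run the crawler (given a review URL)

7. Connect to the database and upload

The simplified flow, function by function: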

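1. Main program and main menu

Main menu:

Prints the menu shown to the user.

Main program:

Imports every module the functions need

and sets up the global variables they use.

※ Global variable: a variable defined at module level and shared by all the functions.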

    # Main menu
    def menu():
        print("========urcosme crawler========")
        print()
        print("Enter an option number")
        print("1. Open the category menu")
        print()
        print("2. Quit")
        print()
        print()
        print("========urcosme crawler========")

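    # Main program
    # Note: a "# -*- coding: utf-8 -*-" line only takes effect on the first or
    # second line of the file; Python 3 already defaults to UTF-8.
    from urllib import request  # HTTP requests
    from bs4 import BeautifulSoup  # HTML parsing
    from urllib.parse import urlparse
    from urllib.request import urlopen
    import pymysql
    from urllib.error import HTTPError
    import re

    # Globals shared by the functions. A `global` statement at module level
    # has no effect, so the names are simply initialised here.
    ttt = ''
    imgg = ''
    ct = ''
    ci = ''
    category = ''

    while True:
        menu()
        try:
            choice = int(input("Enter your choice: "))
        except ValueError:  # non-numeric input falls through to the else branch
            choice = None
        if choice == 2:
            break
        elif choice == 1:
            showall()
        else:
            print("Please enter one of the option numbers")
        x = input("Press Enter to return to the main menu")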

2. Category menu

First prints the menu shown to the user,

then calls howmanypages() at the end.

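    def showall():
        print("========Category menu========")
        print("a.Basic skincare: 1.Cleanser 2.Makeup remover 3.Toner 4.Lotion 5.Cream 6.Gel cream")
        print("                  7.Gel 8.Pre-serum 9.Serum 10.Mask 11.Multi-purpose")
        print("b.Sunscreen     : 12.Face sunscreen 13.Body sunscreen")
        print()
        print("c.Base makeup   : 14.Primer 15.Concealer 16.Foundation 17.Setting")
        print()
        print("d.Color makeup  : 18.Brow 19.Eyeliner 20.Eyeshadow 21.Mascara 22.Blush 23.Contour")
        print("                  24.Lip color 25.Nails 26.Multi-purpose makeup")
        print("all.Everything")
        print()
        print(" Note: enter the first and last page to crawl, then the category code.")
        print("       If more than one category is crawled, each gets the same number of pages.")
        print()
        print()
        howmanypages()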

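3. User enters the pages to crawl

Called from showall().

category is a global variable set by choose_category(), so it has to be declared with global before it is used.

Asks the user for the first and last page,

then asks for the category to crawl.

A loop walks through each page in the user's range.

For each page, choose_category() sets category, the page number is appended to it, and pageurl() is called to collect the review URLs.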

    def howmanypages():
        global category  # use the global variable
        pages1 = int(input("Enter the first page to crawl: "))
        pages2 = int(input("Enter the last page to crawl: "))
        number = input("Enter the category to crawl: ")
        for page in range(pages1, pages2 + 1):
            choose_category(number)  # sets the global `category`
            final = category + str(page)
            print("Category:", number)
            print("From page", pages1, "to page", pages2)
            pageurl(final)

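Things to watch out for:

How range works:

range(x, y, z): x = start, y = stop, z = step

The stop value itself is never produced; range(1, 3), for example, yields only 1 and 2.

So the stop value needs + 1 for the user's last page to be included.

pages2 was converted to int (a number) when it was read,

so it can be added to inside range,

but the page number has to be converted with str before it can be concatenated with the category string.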
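The two points above can be checked with a short sketch. `page_urls` is a hypothetical helper written for this illustration, not a function from the crawler; it only shows the `last + 1` and `str(page)` steps in isolation.

```python
def page_urls(base, first, last):
    """Build one URL per page, including the last page."""
    # range() excludes its stop value, hence last + 1.
    # page is an int, so it is converted with str() before concatenation.
    return [base + str(page) for page in range(first, last + 1)]

print(list(range(1, 3)))  # the stop value 3 is not produced
for url in page_urls("http://www.urcosme.com/tags/11/reviews?page=", 2, 4):
    print(url)
```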

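4. User enters the category to crawl

I haven't come up with a better approach yet; this can probably be optimized further later.

category is declared global first so that other functions can use it.

number is the argument passed in by howmanypages(): what the user typed when choosing a category.

Depending on number, category is set to the URL of the matching category on the site.

If number is a top-level group or all,

a loop runs through every category in it.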

    def choose_category(number):
        global category  # declare the global variable
        if number == '1':
            c_number = 11
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '2':
            c_number = 12
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '3':
            c_number = 13
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '4':
            c_number = 14
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '5':
            c_number = 15
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '6':
            c_number = 16
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '7':
            c_number = 17
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '8':
            c_number = 18
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '9':
            c_number = 19
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '10':
            c_number = 20
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '11':
            c_number = 21
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '12':
            c_number = 26
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '13':
            c_number = 27
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '14':
            c_number = 28
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '15':
            c_number = 29
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '16':
            c_number = 30
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '17':
            c_number = 31
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '18':
            c_number = 32
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '19':
            c_number = 33
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '20':
            c_number = 34
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '21':
            c_number = 35
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '22':
            c_number = 36
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '23':
            c_number = 37
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '24':
            c_number = 38
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '25':
            c_number = 39
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == '26':
            c_number = 40
            category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        # NOTE: in the group branches below each pass of the loop overwrites
        # category, so only the last URL of the group is left when the loop ends.
        elif number == 'a':
            c_numbers = ['11','12','13','14','15','16','17','18','19','20','21']
            for c_number in c_numbers:
                category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == 'b':
            c_numbers = ['26','27']
            for c_number in c_numbers:
                category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == 'c':
            c_numbers = ['28','29','30','31']
            for c_number in c_numbers:
                category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == 'd':
            c_numbers = ['32','33','34','35','36','37','38','39','40']
            for c_number in c_numbers:
                category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
        elif number == 'all':
            c_numbers = ['11','12','13','14','15','16','17','18','19','20','21','26','27','28','29','30','31','32','33','34','35','36','37','38','39','40']
            for c_number in c_numbers:
                category = 'http://www.urcosme.com/tags/{}/reviews?page='.format(c_number)
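One possible direction for the optimization mentioned above is a lookup table instead of the long if/elif chain. The sketch below is only an illustration: `BASE`, `TAG_IDS`, `GROUPS` and `category_urls` are hypothetical names, and the number-to-tag mapping is copied from the chain above. It returns a list of URLs rather than setting the global, so a group choice such as a or all keeps every category instead of only the last one; the caller would then have to loop over that list.

```python
BASE = 'http://www.urcosme.com/tags/{}/reviews?page='

# Menu number -> site tag id, copied from the if/elif chain.
TAG_IDS = {str(n): n + 10 for n in range(1, 12)}         # 1-11  -> 11-21
TAG_IDS.update({str(n): n + 14 for n in range(12, 27)})  # 12-26 -> 26-40

# Group code -> the menu numbers it covers.
GROUPS = {
    'a': range(1, 12),
    'b': range(12, 14),
    'c': range(14, 18),
    'd': range(18, 27),
    'all': range(1, 27),
}

def category_urls(number):
    """Return the base URLs for a menu choice (empty list if unknown)."""
    if number in GROUPS:
        return [BASE.format(TAG_IDS[str(n)]) for n in GROUPS[number]]
    if number in TAG_IDS:
        return [BASE.format(TAG_IDS[number])]
    return []

print(category_urls('12'))
print(len(category_urls('all')))
```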

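5. Collect every review URL on the chosen pages of that category

Called from howmanypages().

Takes the URL it is given,

finds every review URL on that page,

puts them into a list,

then loops over the list and calls allin() on each review URL to crawl it.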

    def pageurl(cc):
        url = cc
        user_agent = 'Mozilla/5.0 (Windows; U; Windows NT 6.1; zh-CN; rv:1.9.2.15) Gecko/20110303 Firefox/3.6.15'
        headers = {'User-Agent': user_agent}
        data_res = request.Request(url=url, headers=headers)
        data = request.urlopen(data_res)
        data = data.read().decode('utf-8')
        sp = BeautifulSoup(data, "lxml")  # parse the page with BeautifulSoup
        domain = "http://www.urcosme.com"  # the hrefs are relative, so the domain is defined here
        all_links = sp.find_all('a')  # every <a> tag on the page
        morelink = []
        for link in all_links:  # read the href attribute of each <a> tag
            href = link.get('href')
            if href is not None and href.startswith('/reviews/'):  # keep hrefs that start with /reviews/
                more = domain + href
                morelink.append(more)
        mls = morelink
        for ml in mls:
            allin(ml)
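The link-filtering step in pageurl() can be tried without touching the network. The sketch below swaps in the standard library's `html.parser.HTMLParser` for BeautifulSoup so that it runs with no extra packages, and uses `urljoin` to prepend the domain; `ReviewLinkParser` and the sample HTML are made up for illustration and are not taken from the site.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class ReviewLinkParser(HTMLParser):
    """Collect absolute URLs of <a> tags whose href starts with /reviews/."""

    def __init__(self, domain):
        super().__init__()
        self.domain = domain
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != 'a':
            return
        href = dict(attrs).get('href')
        # Same filter as pageurl(): skip missing hrefs, keep /reviews/ paths.
        if href is not None and href.startswith('/reviews/'):
            self.links.append(urljoin(self.domain, href))

# Made-up HTML fragment, for illustration only.
sample = ('<a href="/reviews/123">A</a><a href="/products/9">B</a>'
          '<a>no href</a><a href="/reviews/456">C</a>')
parser = ReviewLinkParser("http://www.urcosme.com")
parser.feed(sample)
print(parser.links)
```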

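6. Run the crawler (given a review URL)

Declares the global variables it needs.

Once the data has been scraped, it calls connect_mysql() to connect to the database.

1. Tag:

Not optimized.

2. Main image:

Now handles reviews that have no image.

3. Body text:

Many emoji turn into garbled text when read back from the database, so they are removed with .replace("emoji", "").

I don't have a better solution yet; this can be optimized further later.

4. Body images:

The previous version stored only one URL in the database.

This time every URL is appended to a list, and the list is first converted to str (a string),

so that .replace can strip the list's [ ' , ] punctuation, leaving only the URLs in the database and nothing extra.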
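A possible alternative to listing each emoji by hand is to strip a whole range of characters with one regular expression. The sketch below rests on an assumption the post does not confirm: that the garbling comes from characters above U+FFFF, which MySQL's 3-byte `utf8` charset cannot store (its `utf8mb4` charset can). `strip_non_bmp` is a hypothetical helper name.

```python
import re

# Matches any character above U+FFFF, where most emoji live.
NON_BMP = re.compile('[\U00010000-\U0010FFFF]')

def strip_non_bmp(text):
    """Remove every character outside the Basic Multilingual Plane."""
    return NON_BMP.sub('', text)

print(strip_non_bmp('好用😊推薦👍🏻!'))
```

Characters such as ✨ (U+2728) sit below U+FFFF and are left in place by this pattern, so it is not a drop-in replacement for the .replace chain.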

    def allin(linkkk):
        global ttt
        global imgg
        global ct
        global ci
        url = linkkk  # the review URL
        user_agent = 'Mozilla/5.0 (Windows; U; Windows NT 6.1; zh-CN; rv:1.9.2.15) Gecko/20110303 Firefox/3.6.15'
        headers = {'User-Agent': user_agent}
        data_res = request.Request(url=url, headers=headers)
        data = request.urlopen(data_res)
        data = data.read().decode('utf-8')
        sp = BeautifulSoup(data, "lxml")

        # Tag
        title = sp.findAll("span", {"itemprop": "name"})
        tt = []
        for t in title:
            tt.append(t)
        try:
            ttt = tt[4].text
        except IndexError:
            ttt = tt[1].text
        print('Tag:', ttt)

        # Main image
        print('Main image link:')
        img1 = sp.find("div", {"class": "main-image"}).findAll("img", src=re.compile(r"/review_image/"))
        img2 = sp.find("div", {"class": "main-image"}).findAll("img", src=re.compile(r"/product_image/"))
        for img in img1:
            imgg = img['src']
            print(img['src'])
        # The original tested img1 inside this loop, so a product image was
        # skipped whenever there was no review image.
        for img in img2:
            imgg = img['src']
            print(img['src'])
        if img1 == [] and img2 == []:
            imgg = '無圖片'  # "no image"; this value is stored in the database
            print('No image')

        # Body text
        print('Body text:')
        contents = sp.findAll("div", {"class": "review-content"})
        for content in contents:
            ct = content.text
            for emoji in ("😊", "🔺", "😘", "😌", "😍", "😳", "😅", "😆", "✨", "😂", "👍🏻"):
                ct = ct.replace(emoji, "")
            print(ct)

        # Body images
        print('Body images:')
        c_img1 = sp.find("div", {"class": "review-content"}).findAll("img", src=re.compile(r"/review"))
        cc_img = []
        if c_img1 != []:  # the search above may find nothing, so check before reading src
            for c_img in c_img1:
                cc_img.append(c_img['src'])
            # convert to str, then replace the extra punctuation with spaces
            print(str(cc_img[:]).replace("[", " ").replace("]", " ").replace("'", " ").replace(", ", " "))
        else:
            print('No image')
        ci = str(cc_img[:]).replace("[", " ").replace("]", " ").replace("'", " ").replace(", ", " ")
        connect_mysql()
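The str-then-replace step for the body image URLs could probably be shortened with `str.join`. This is only a sketch with a hypothetical helper name, `join_urls`; unlike the replace chain it produces no leading, trailing or doubled spaces, so the stored value would look slightly different.

```python
def join_urls(urls):
    """Join a list of URL strings into one space-separated string."""
    return " ".join(urls)

print(join_urls(["http://example.com/a.jpg", "http://example.com/b.jpg"]))
```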

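7. Connect to the database and upload

Declares the global variables so that it can use what the crawler just collected.

Picks the database table to write to according to category.

Uses fetchall to check whether the same body text is already stored, to avoid inserting duplicates.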

    def connect_mysql():
        global category
        global ttt
        global imgg
        global ct
        global ci
        # Map the category URL to its table name (table names are kept as in the database).
        if category == 'http://www.urcosme.com/tags/11/reviews?page=':
            cate = '`洗臉`'  # cleanser
        elif category == 'http://www.urcosme.com/tags/12/reviews?page=':
            cate = '`卸妝`'  # makeup remover
        elif category == 'http://www.urcosme.com/tags/13/reviews?page=':
            cate = '`化妝水`'  # toner
        elif category == 'http://www.urcosme.com/tags/14/reviews?page=':
            cate = '`乳液`'  # lotion
        elif category == 'http://www.urcosme.com/tags/15/reviews?page=':
            cate = '`乳霜`'  # cream
        elif category == 'http://www.urcosme.com/tags/16/reviews?page=':
            cate = '`凝霜`'  # gel cream
        elif category == 'http://www.urcosme.com/tags/17/reviews?page=':
            cate = '`凝膠`'  # gel
        elif category == 'http://www.urcosme.com/tags/18/reviews?page=':
            cate = '`前導`'  # pre-serum
        elif category == 'http://www.urcosme.com/tags/19/reviews?page=':
            cate = '`精華`'  # serum
        elif category == 'http://www.urcosme.com/tags/20/reviews?page=':
            cate = '`面膜`'  # mask
        elif category == 'http://www.urcosme.com/tags/21/reviews?page=':
            cate = '`多功能`'  # multi-purpose
        elif category == 'http://www.urcosme.com/tags/26/reviews?page=':
            cate = '`臉部防曬`'  # face sunscreen
        elif category == 'http://www.urcosme.com/tags/27/reviews?page=':
            cate = '`身體防曬`'  # body sunscreen
        elif category == 'http://www.urcosme.com/tags/28/reviews?page=':
            cate = '`妝前`'  # primer
        elif category == 'http://www.urcosme.com/tags/29/reviews?page=':
            cate = '`遮瑕`'  # concealer
        elif category == 'http://www.urcosme.com/tags/30/reviews?page=':
            cate = '`粉底`'  # foundation
        elif category == 'http://www.urcosme.com/tags/31/reviews?page=':
            cate = '`定裝`'  # setting
        elif category == 'http://www.urcosme.com/tags/32/reviews?page=':
            cate = '`眉彩`'  # brow
        elif category == 'http://www.urcosme.com/tags/33/reviews?page=':
            cate = '`眼線`'  # eyeliner
        elif category == 'http://www.urcosme.com/tags/34/reviews?page=':
            cate = '`眼影`'  # eyeshadow
        elif category == 'http://www.urcosme.com/tags/35/reviews?page=':
            cate = '`睫毛`'  # mascara
        elif category == 'http://www.urcosme.com/tags/36/reviews?page=':
            cate = '`頰彩`'  # blush
        elif category == 'http://www.urcosme.com/tags/37/reviews?page=':
            cate = '`修容`'  # contour
        elif category == 'http://www.urcosme.com/tags/38/reviews?page=':
            cate = '`唇彩`'  # lip color
        elif category == 'http://www.urcosme.com/tags/39/reviews?page=':
            cate = '`美甲`'  # nails
        elif category == 'http://www.urcosme.com/tags/40/reviews?page=':
            cate = '`多功能彩妝`'  # multi-purpose makeup
        db = pymysql.connect(
            host='xxxxxx',
            user='xxxxxx',
            passwd='xxxxxx',
            database='xxxxxx',
            charset='utf8',
        )
        cursor = db.cursor()
        # A table name cannot be bound as a parameter, so cate is concatenated in.
        # The values are passed separately, so quotes in the text cannot break the SQL.
        try:
            cursor.execute("SELECT * FROM " + cate + " WHERE `內文` = %s", (ct,))
            results = cursor.fetchall()
            if len(results) == 0:
                cursor.execute(
                    "INSERT INTO " + cate + " (`標籤名稱`, `主圖片`, `內文`, `內文圖片`) VALUES (%s, %s, %s, %s)",
                    (ttt, imgg, ct, ci))
                db.commit()
            else:
                print('This article is already stored')
        except pymysql.MySQLError:
            print("This article contains special characters")
        finally:
            db.close()
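The check-then-insert pattern of connect_mysql() can be tried locally without a MySQL server. The sketch below uses the standard library's `sqlite3` with an in-memory database as a stand-in; the table, its column names and `save_review` are hypothetical. Note that `sqlite3` writes placeholders as `?`, while pymysql uses `%s`. Passing the values as parameters lets text that contains quotes be stored without breaking the SQL statement.

```python
import sqlite3

def save_review(db, tag, main_img, body, body_imgs):
    """Insert a review unless the same body text is already stored.

    Returns True when a row was inserted, False for a duplicate.
    """
    cur = db.cursor()
    # Values are bound as parameters, never formatted into the SQL string.
    cur.execute("SELECT 1 FROM reviews WHERE body = ?", (body,))
    if cur.fetchone() is not None:
        return False
    cur.execute(
        "INSERT INTO reviews (tag, main_img, body, body_imgs) VALUES (?, ?, ?, ?)",
        (tag, main_img, body, body_imgs))
    db.commit()
    return True

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE reviews (tag TEXT, main_img TEXT, body TEXT, body_imgs TEXT)")
print(save_review(db, "toner", "no image", "It's \"great\"", ""))  # first time: inserted
print(save_review(db, "toner", "no image", "It's \"great\"", ""))  # second time: duplicate
```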

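Next steps:

1. Finish the program and keep optimizing it

2. Keep testing and track down bugs

3. Write an app that pulls the data from the database and displays it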
